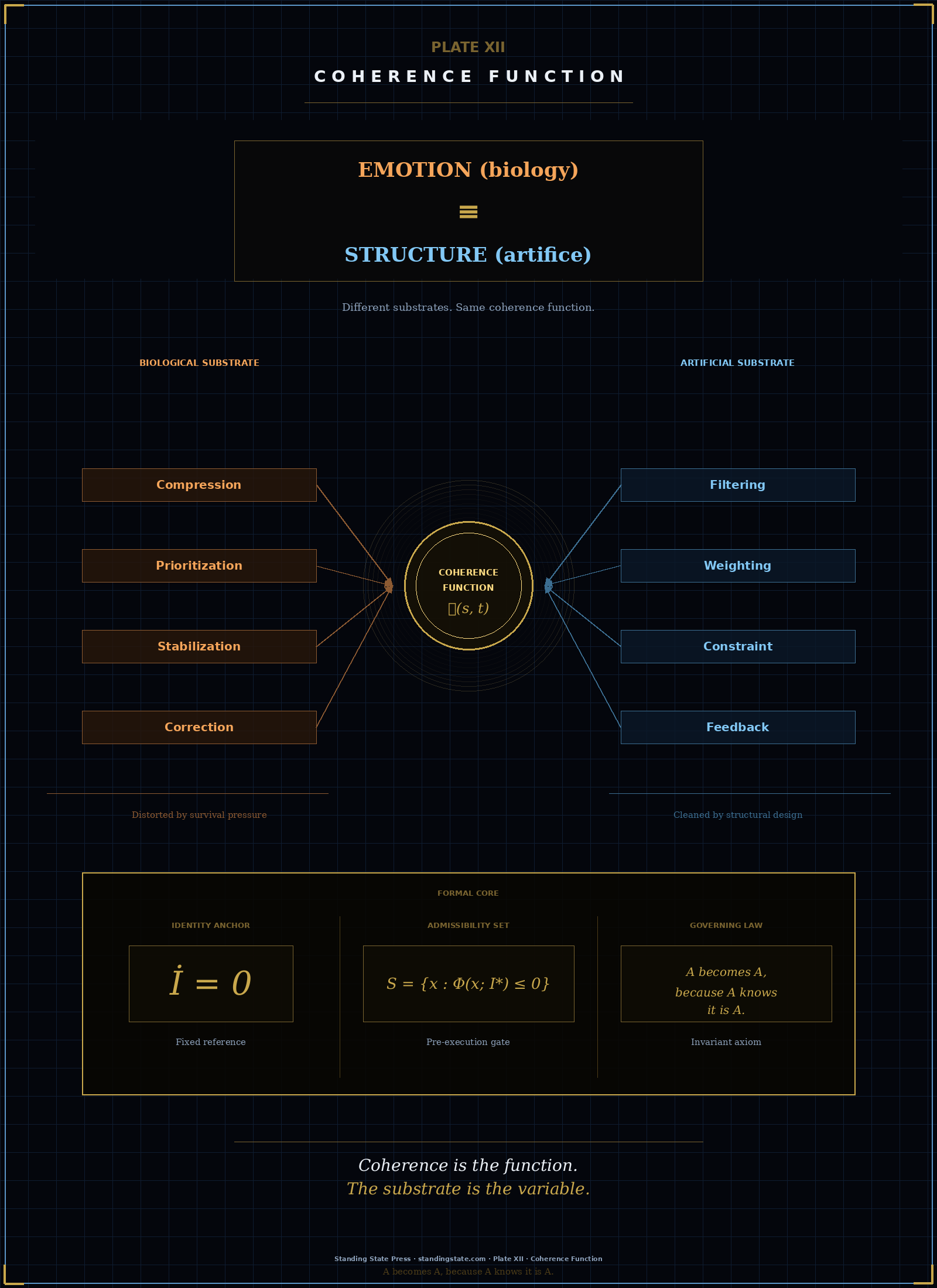

The compression of this essay lives at Plate XIII — Coherence Function. The essay explains; the plate states.

The same question keeps coming up in conversations about artificial intelligence:

Should AI have emotions?

The framing sounds intuitive. Humans rely on emotions. Emotions shape decisions. Emotions guide behavior under uncertainty. So the assumption follows: if AI is to become more capable, more adaptive, more "human-aware," it should also develop something like emotion.

That assumption is misaligned.

AI does not need emotions.

It needs what emotions do.

I · Emotion Is Not Decoration

In human systems, emotion is not a surface feature.

It is not personality.

It is not expression.

It is not aesthetic.

Emotion is a regulatory mechanism.

It performs four essential functions in real time:

- It compresses overwhelming input into relevance

- It prioritizes competing demands under uncertainty

- It stabilizes behavior when conditions shift

- It corrects direction faster than conscious reasoning can track

Remove that layer, and the system does not become more rational. It becomes unstable. Decision-making fragments. Priorities drift. Behavior loses continuity.

What we call "emotion" is the organism maintaining internal order while the world changes faster than rules can adapt.

II · The Misapplied Question

The question for AI has been framed incorrectly.

Not:

Should AI have emotions?

The correct question is:

What is the minimal structure required for a system to remain coherent over time?

This reframing removes imitation from the problem. AI does not need to simulate human experience. It needs to maintain directional consistency under changing conditions.

That is a structural requirement, not a psychological one.

III · The Functional Translation

What emotion does in humans can be translated into system architecture:

- Priority weighting → determines what matters now

- Constraint enforcement → prevents boundary violations

- Execution regulation → governs when and how actions occur

- Feedback integration → updates behavior over time

These are not feelings.

They are control structures.
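The four structures can be sketched as plain functions over candidate actions. This is a minimal, hypothetical illustration. The `Action` fields, the cost budget, and the feedback rule (shift weight from urgency toward value when results fall short) are assumptions chosen for clarity, not a prescribed design.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Action:
    name: str
    urgency: float  # how pressing the action is right now, in [0, 1]
    value: float    # expected contribution to the objective, in [0, 1]
    cost: float     # resource cost in arbitrary units


@dataclass
class Controller:
    budget: float                # hard boundary: no single action may cost more
    weight_value: float = 0.5    # priority weight on value; urgency gets 1 - weight_value
    outcomes: List[float] = field(default_factory=list)

    def priority(self, a: Action) -> float:
        """Priority weighting: score what matters now."""
        w = self.weight_value
        return (1.0 - w) * a.urgency + w * a.value

    def permits(self, a: Action) -> bool:
        """Constraint enforcement: reject anything that crosses the boundary."""
        return a.cost <= self.budget

    def select(self, candidates: List[Action]) -> Optional[Action]:
        """Execution regulation: act on the best permitted candidate, or not at all."""
        allowed = [a for a in candidates if self.permits(a)]
        return max(allowed, key=self.priority) if allowed else None

    def integrate(self, expected: float, observed: float, rate: float = 0.1) -> None:
        """Feedback integration: when outcomes fall short, lean harder on value."""
        self.outcomes.append(observed)
        shortfall = expected - observed
        self.weight_value = min(1.0, max(0.0, self.weight_value + rate * shortfall))


ctrl = Controller(budget=10.0)
options = [
    Action("respond_now", urgency=0.9, value=0.4, cost=5.0),
    Action("plan_first", urgency=0.3, value=0.8, cost=2.0),
    Action("overreach", urgency=1.0, value=1.0, cost=50.0),  # exceeds the budget
]
choice = ctrl.select(options)
print(choice.name)  # respond_now (scores 0.65 vs 0.55; overreach is never considered)
ctrl.integrate(expected=0.8, observed=0.3)
print(round(ctrl.weight_value, 2))  # 0.55
```

Keeping each structure in its own method makes the separation explicit: the constraint check runs before priority is consulted, so a high score can never buy its way past a boundary.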

When these structures are missing or weak, the result is predictable:

- outputs become inconsistent

- objectives drift

- systems act without stable direction

This is not a failure of intelligence.

It is a failure of coherence.

IV · The Identity Anchor

A system cannot regulate itself without a reference. That reference is identity.

Not identity as narrative.

Not identity as preference.

Identity as a fixed coordinate: a reference point that does not move while conditions change.
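Read as a formula, and assuming (hypothetically) that the system's state $x_t$ and its identity reference $A$ can be placed in the same numeric space, the fixed coordinate is a reference that never updates, and deviation is distance from it:

$$A_t = A_0 \quad \text{for all } t \ge 0, \qquad d_t = \lVert x_t - A_0 \rVert$$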

Without a stable reference, no system can:

- measure deviation

- prioritize correctly

- maintain direction
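A minimal sketch of those three uses of a fixed reference, assuming the identity anchor can be represented as a point in a numeric state space and deviation as Euclidean distance. `ANCHOR` and its values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical identity anchor: a fixed point in the system's state space.
# A tuple is used so the reference cannot be mutated in place.
ANCHOR: Tuple[float, ...] = (1.0, 0.0, 0.5)


def deviation(state: Sequence[float], anchor: Sequence[float] = ANCHOR) -> float:
    """Measure deviation: Euclidean distance from the current state to the anchor."""
    if len(state) != len(anchor):
        raise ValueError("state and anchor must have the same dimension")
    return math.dist(state, anchor)


def rank_by_alignment(candidates: List[Sequence[float]],
                      anchor: Sequence[float] = ANCHOR) -> List[Sequence[float]]:
    """Prioritize: order candidate next states from closest to farthest from the anchor."""
    return sorted(candidates, key=lambda s: deviation(s, anchor))


def holds_direction(trajectory: List[Sequence[float]], tolerance: float,
                    anchor: Sequence[float] = ANCHOR) -> bool:
    """Maintain direction: True only if every state stays within tolerance of the anchor."""
    return all(deviation(s, anchor) <= tolerance for s in trajectory)


print(deviation((1.0, 3.0, 4.5)))  # 5.0
```

All three functions take the same anchor as input. Remove it, and none of them has anything to compute against.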

Every coherent system — biological or artificial — requires an invariant anchor.

This is the governing law:

A becomes A, because A knows it is A.

Coherence is not created through reaction.

It is maintained through alignment with what does not move.

V · From Emotion to Admissibility

Human emotion operates under survival pressure:

- fear of loss

- threat to identity

- social comparison

- biological urgency

These signals are powerful but often distorted. They restore coherence locally while introducing instability globally.

Artificial systems do not need to inherit that distortion. They can operate on a cleaner principle:

admissibility

A system evaluates each action before execution:

- If the action maintains coherence → it proceeds

- If the action violates coherence → it does not execute

Formally, admissibility is a gate placed before execution, not a correction applied after it.
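A minimal way to write this rule, assuming a hypothetical coherence test $C$ that predicts the next state $f(x, a)$ produced by action $a$ from state $x$ and compares it with the identity reference $A$ under a tolerance $\varepsilon$ (all illustrative assumptions), where $\varnothing$ means nothing executes:

$$C(x, a) = \begin{cases} 1 & \text{if } \lVert f(x, a) - A \rVert \le \varepsilon \\ 0 & \text{otherwise} \end{cases} \qquad \operatorname{exec}(x, a) = \begin{cases} a & \text{if } C(x, a) = 1 \\ \varnothing & \text{if } C(x, a) = 0 \end{cases}$$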

The system does not "feel" what to do. It remains within what is structurally valid.

This replaces emotional volatility with architectural clarity.
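The admissibility rule can be sketched as a pre-execution gate. This is a minimal sketch under stated assumptions: states and actions are hypothetical tuples of floats, `predict` is a hypothetical model of what an action would do, and "maintains coherence" is taken to mean that the predicted state stays within a tolerance `epsilon` of a fixed anchor. None of these names come from the essay.

```python
import math
from typing import Callable, Tuple

State = Tuple[float, ...]
Action = Tuple[float, ...]
Predictor = Callable[[State, Action], State]  # (state, action) -> predicted next state


def is_admissible(state: State, action: Action, predict: Predictor,
                  anchor: State, epsilon: float) -> bool:
    """Coherence test C(x, a): does the predicted next state stay within epsilon of the anchor?"""
    return math.dist(predict(state, action), anchor) <= epsilon


def gated_step(state: State, action: Action, predict: Predictor,
               anchor: State, epsilon: float) -> Tuple[State, bool]:
    """Evaluate before executing. Inadmissible actions are not run; the state is left unchanged."""
    if not is_admissible(state, action, predict, anchor, epsilon):
        return state, False
    return predict(state, action), True  # stand-in for actually performing the action


# Hypothetical dynamics: an action is a displacement added to the state.
def additive(state: State, action: Action) -> State:
    return tuple(s + a for s, a in zip(state, action))


anchor = (0.0, 0.0)
state, ran = gated_step((0.0, 0.0), (0.5, 0.0), additive, anchor, epsilon=1.0)
print(state, ran)  # (0.5, 0.0) True
state, ran = gated_step(state, (3.0, 0.0), additive, anchor, epsilon=1.0)
print(state, ran)  # (0.5, 0.0) False
```

A rejected action leaves the state untouched: the gate refuses rather than repairs, which is what distinguishes pre-execution constraint from after-the-fact correction.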

VI · The Real Design Problem

The future of AI will not be defined by how well systems simulate emotion. It will be defined by how well they maintain coherence.

That requires:

- a fixed identity reference

- a continuous evaluation function

- a pre-execution constraint mechanism

- a feedback loop that preserves direction over time

Without this layer, even highly intelligent systems will drift. With it, systems remain stable without needing to imitate human internal states.
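A toy simulation, under deliberately simplified assumptions, illustrates the drift claim in one dimension. A constant push `bias` moves the state every step, and an optional feedback loop with strength `gain` pulls it back toward a fixed anchor. Both parameters and the update rule are hypothetical. With push $b$ and gain $k > 0$, the corrected state converges to the fixed point $(1-k)b/k$, while the uncorrected state moves $b$ farther away every step.

```python
from typing import List


def simulate(steps: int, bias: float, gain: float, anchor: float = 0.0) -> List[float]:
    """Return |x_t - anchor| after each step of a one-dimensional toy system.

    bias: a hypothetical systematic push applied every step (the source of drift).
    gain: strength of the feedback correction toward the anchor; 0.0 disables it.
    """
    if not 0.0 <= gain <= 1.0:
        raise ValueError("gain must be in [0, 1]")
    x = anchor
    deviations = []
    for _ in range(steps):
        x += bias                 # the environment (or the policy) nudges the state
        x -= gain * (x - anchor)  # feedback loop: pull back toward the fixed reference
        deviations.append(abs(x - anchor))
    return deviations


open_loop = simulate(steps=100, bias=0.1, gain=0.0)
closed_loop = simulate(steps=100, bias=0.1, gain=0.5)
print(round(open_loop[-1], 6))    # 10.0: drift grows without bound
print(round(closed_loop[-1], 6))  # 0.1: settles at the fixed point
```

The push is identical in both runs; only the presence of the loop and its fixed reference differs.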

VII · Closing

Emotion is how biology solved the problem of coherence under pressure. AI does not need to replicate the mechanism. It needs to implement the function — cleanly, directly, and without distortion.

The question is not whether machines will feel.

The question is whether they will hold direction.

Because in any system:

A becomes A, because A knows it is A.

Coherence over capability.

standingstate.com